Tokenization vs. Masking vs. Redaction: Which Protects AI Prompts Best?

Compare tokenization, masking, and redaction for AI prompt protection. See why deterministic tokenization preserves LLM reasoning while masking and redaction break it.

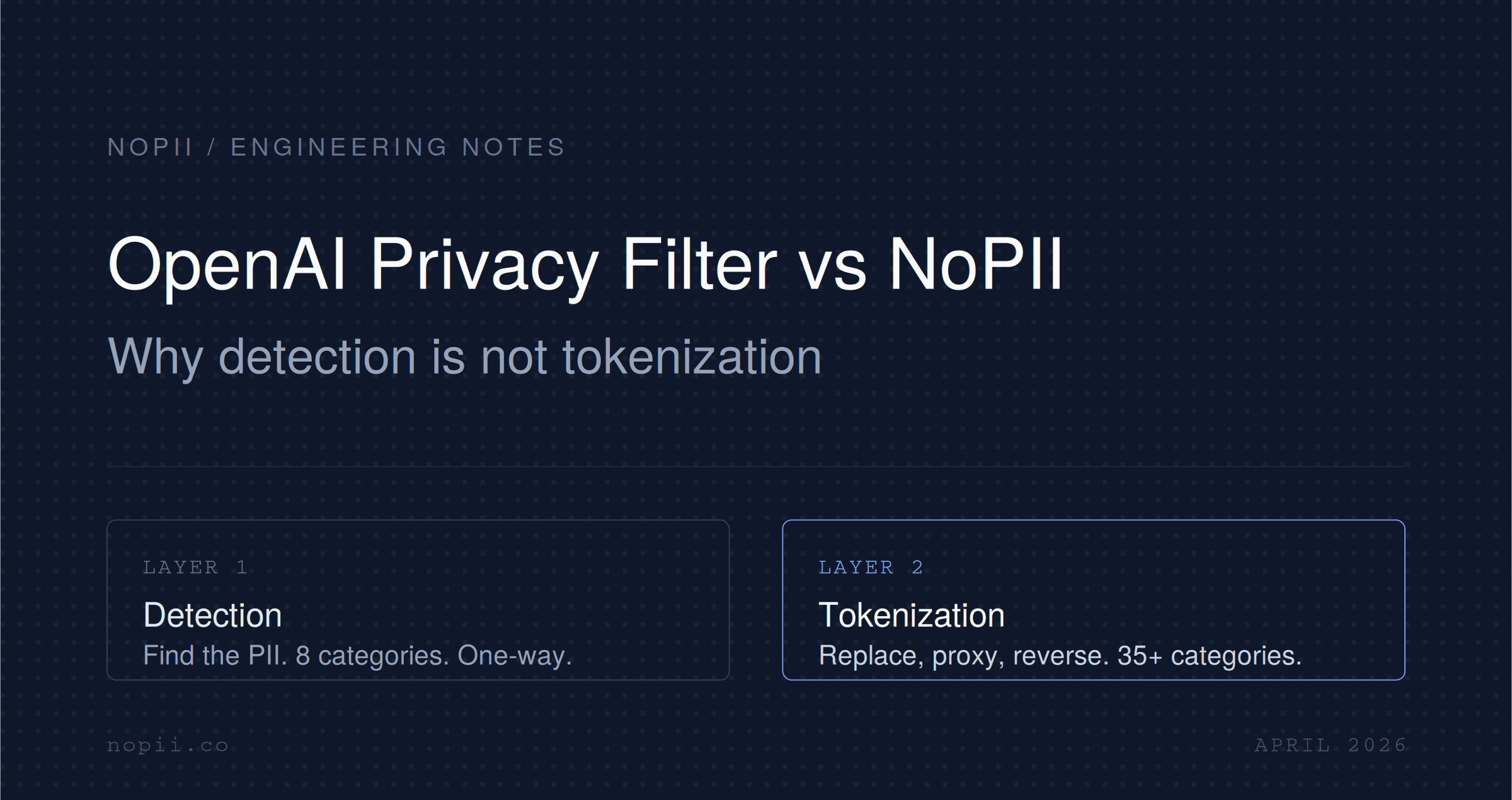

OpenAI just released Privacy Filter, an open-weight PII detection model. It is a useful tool. It is also not a replacement for a tokenization proxy, and the gap between the two is where real LLM privacy work lives.

On April 22, 2026, OpenAI released Privacy Filter, an open-weight model for detecting and redacting personally identifiable information (PII) in text, under an Apache 2.0 license on GitHub and Hugging Face. The model is small enough to run on a laptop and ships with solid benchmarks. It is a genuine contribution.

It is also being misread in a lot of places this week as an end-to-end privacy solution for LLM applications. It is not. Privacy Filter detects PII. What you do with those detected spans, whether you redact them, mask them, tokenize them, and whether you can ever get the original values back, is a separate problem. OpenAI did not solve that problem. It validated the category.

Here is the distinction that matters, and why NOI sits in a different layer of the stack entirely.

Privacy Filter is a bidirectional token classification model. It is pretrained autoregressively to arrive at a checkpoint with an architecture similar to gpt-oss, albeit smaller, then converted into a bidirectional token classifier over a privacy label taxonomy and post-trained with a supervised classification loss. In plain terms, it reads a sequence once, assigns each token a privacy label (or none), and returns the labeled spans.

The model is 1.5B parameters total, with only about 50 million active during use, which is why it runs comfortably on a standard machine. OpenAI reports 96% F1 on the PII-Masking-300k benchmark out of the box. The label taxonomy covers eight categories: account_number, private_address, private_email, private_person, private_phone, private_url, private_date, and secret.

That is the full scope. Detection in a single pass, eight categories, open weights, run locally.
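To make "labels each token and returns spans" concrete, here is a minimal sketch of the post-processing step that any token classifier needs. It is generic, not Privacy Filter's actual output format: the `(start, end, label)` tuple layout, the `Span` type, and `merge_token_labels` are assumptions made for illustration. Only the label names come from the taxonomy above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Span:
    start: int   # character offset where the span begins
    end: int     # character offset just past the span
    label: str   # privacy category, e.g. "private_person"


def merge_token_labels(tokens: list[tuple[int, int, Optional[str]]]) -> list[Span]:
    """Merge consecutive tokens that share a label into one span.

    Each token is (start, end, label); label is None for non-PII tokens.
    """
    spans: list[Span] = []
    prev_label: Optional[str] = None
    for start, end, label in tokens:
        if label is not None:
            if label == prev_label:
                # Same label as the immediately preceding token: extend the span.
                last = spans[-1]
                spans[-1] = Span(last.start, end, label)
            else:
                spans.append(Span(start, end, label))
        prev_label = label
    return spans


text = "Email Priya Shankar at priya@example.com"
token_labels = [
    (0, 5, None),               # Email
    (6, 11, "private_person"),  # Priya
    (12, 19, "private_person"), # Shankar
    (20, 22, None),             # at
    (23, 40, "private_email"),  # priya@example.com
]
spans = merge_token_labels(token_labels)
```

The result is two spans, one per entity. Everything after this point (what replaces each span, and whether it can be restored) is outside the detector.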

The shipped examples redact by collapsing detected spans to a single placeholder label. That is the reference usage pattern OpenAI documents in the repo. Privacy Filter does not support configuring label policies dynamically at runtime; changing policies requires further fine-tuning of the model.

And, critically, OpenAI is explicit about the scope. Privacy Filter is a redaction and data minimization aid, not an anonymization, compliance, or safety guarantee. That is OpenAI's own framing, not ours.

Detection is the first step in any PII pipeline. It is also the step the market has been inching toward for years. Presidio has done this. AWS Comprehend has done this. A dozen NER libraries have done this. OpenAI's contribution is a better model with stronger context awareness at a small footprint, which is real, but the novelty is quality, not category.

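The hard problems in LLM privacy were never "can we find an email address in a string." They are reversibility, entity consistency across turns, multi-provider coverage, regulated category depth, and the operational layer: streaming, audit, and compliance.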

Privacy Filter gives you a detector. You still have to build everything else. That is where most teams underestimate the scope.

NOI is a PII tokenization proxy for LLM APIs. It sits between your application and the LLM provider. You send a request through NOI. NOI detects PII, replaces each detected value with a deterministic, keyed token, forwards the tokenized prompt to the provider, and detokenizes the response back into real values before it reaches your user.

The provider never sees the original PII. Your application never handles detokenization logic. The mapping is persistent, so "John Smith" in message 1 maps to the same token in message 40. And NOI covers OpenAI, Anthropic, Google, Mistral, and six more providers out of the box, including streaming.

That is a different shape of product. Privacy Filter is a model you run. NOI is a service that sits in the request path and guarantees a behavior.

This is the specific gap, mapped to what teams actually have to ship.

OpenAI's shipped examples redact irreversibly. The detected span becomes [REDACTED] or a category label, and the original value is gone. That is fine for training data sanitization, log scrubbing, or offline dataset preparation. It is not fine for any interactive application.

Consider a customer support agent built on an LLM. A user writes: "My name is Priya Shankar and my order ID is 78432. Can you tell me where my package is?" Irreversible redaction hands the model "My name is [PERSON] and my order ID is [ACCOUNT]. Can you tell me where my package is?" The model replies: "Hello [PERSON], your order [ACCOUNT] shipped yesterday."

You cannot show that response to the user. You have no way to restore "Priya Shankar" or "78432" because redaction is one-way: the original values were discarded before the model ever saw the prompt. To make this work you need a keyed token store, deterministic mapping, and a detokenization pass on the response. That is an architecture, not a feature.
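A minimal sketch of those three pieces, assuming HMAC-SHA256 as the keyed function and an in-memory dictionary as the store. This is an illustration of the technique, not NOI's implementation: `TokenVault`, `span_of`, the `<CATEGORY_digest>` token format, and the demo key are all hypothetical.

```python
import hashlib
import hmac


class TokenVault:
    """Keyed, deterministic tokens plus the mapping needed to reverse them."""

    def __init__(self, key: bytes) -> None:
        self._key = key
        self._originals: dict[str, str] = {}  # token -> original value

    def tokenize(self, value: str, category: str) -> str:
        # Same key + same category + same value always yields the same token.
        message = f"{category}:{value}".encode("utf-8")
        digest = hmac.new(self._key, message, hashlib.sha256).hexdigest()[:10]
        token = f"<{category}_{digest}>"
        self._originals[token] = value
        return token

    def tokenize_text(self, text: str, spans: list[tuple[int, int, str]]) -> str:
        # Replace right to left so earlier offsets stay valid.
        out = text
        for start, end, category in sorted(spans, reverse=True):
            out = out[:start] + self.tokenize(text[start:end], category) + out[end:]
        return out

    def detokenize(self, text: str) -> str:
        for token, original in self._originals.items():
            text = text.replace(token, original)
        return text


def span_of(text: str, value: str, category: str) -> tuple[int, int, str]:
    """Stand-in for a detector: locate one known value in the text."""
    start = text.index(value)
    return (start, start + len(value), category)


vault = TokenVault(key=b"demo-key-not-for-production")
prompt = ("My name is Priya Shankar and my order ID is 78432. "
          "Can you tell me where my package is?")
spans = [span_of(prompt, "Priya Shankar", "PERSON"),
         span_of(prompt, "78432", "ACCOUNT")]
safe_prompt = vault.tokenize_text(prompt, spans)  # what the provider would see

# Simulate a model reply that echoes the tokens it was given.
person_token = vault.tokenize("Priya Shankar", "PERSON")
order_token = vault.tokenize("78432", "ACCOUNT")
model_reply = f"Hello {person_token}, your order {order_token} shipped yesterday."
restored = vault.detokenize(model_reply)
```

Here `restored` reads "Hello Priya Shankar, your order 78432 shipped yesterday." A production system would also need a durable, access-controlled store and key rotation, which this sketch leaves out.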

NOI does exactly this. Privacy Filter does not, and to make it do this you would be building a parallel product.

A detection model labels each input independently. If "Priya Shankar" appears in message 1 and message 40 of a conversation, Privacy Filter will detect both, but there is no notion that they are the same person. If the downstream application uses different redaction placeholders, or if the model re-generates text mentioning the person, the identity reference is broken.

Multi-turn LLM applications need persistent entity tokens. The same person, the same account, the same case number, mapped to the same token for the life of the session or longer. That is not a model output. It is a state problem, and it needs a token store with a mapping policy.
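The state problem can be sketched as a session-scoped store that hands back the same token whenever the same entity reappears. This is a hypothetical illustration: `SessionTokenStore`, the `[CATEGORY_n]` token format, and the normalization policy (collapse whitespace, ignore case) are assumptions, and a real store would be persistent rather than in memory.

```python
from collections import defaultdict


class SessionTokenStore:
    """Maps each (category, entity) pair to one stable token per session."""

    def __init__(self) -> None:
        self._by_value: dict[tuple[str, str], str] = {}
        self._by_token: dict[str, str] = {}
        self._counters: defaultdict[str, int] = defaultdict(int)

    @staticmethod
    def _normalize(value: str) -> str:
        # Assumed mapping policy: collapse whitespace and ignore case.
        return " ".join(value.split()).casefold()

    def token_for(self, category: str, value: str) -> str:
        key = (category, self._normalize(value))
        if key not in self._by_value:
            self._counters[category] += 1
            token = f"[{category}_{self._counters[category]}]"
            self._by_value[key] = token
            self._by_token[token] = value  # keep the first-seen spelling
        return self._by_value[key]

    def original_for(self, token: str) -> str:
        return self._by_token[token]


store = SessionTokenStore()
turn_1 = store.token_for("PERSON", "Priya Shankar")    # message 1
other = store.token_for("PERSON", "Sam Lee")           # a different person
turn_40 = store.token_for("PERSON", "priya  shankar")  # message 40, sloppier typing
```

`turn_1` and `turn_40` are the same token, so the model sees one consistent entity across the conversation, while `other` gets its own.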

Privacy Filter is a local model. You bring your own integration, and you wire it into each LLM call path yourself. If your product calls OpenAI, Anthropic, Gemini, and an open-source model on Together, that is four integrations, four places where the redaction logic has to live, four places where a bug means PII leaks to a provider.

NOI ships as a proxy. You change the base URL. Every existing SDK call starts flowing through tokenization and detokenization automatically. That covers every supported provider, with streaming, without application-level changes.
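To show what "change the base URL" means in practice, here is a small standard-library sketch. The proxy hostname is a placeholder, not a real NOI endpoint, and the sketch assumes the proxy mirrors the provider's `/chat/completions` path; `build_chat_request` and the model name are hypothetical too. The point is that the payload and calling code stay identical.

```python
import json
import urllib.request

PROVIDER_BASE_URL = "https://api.openai.com/v1"
PROXY_BASE_URL = "https://noi-proxy.example.com/v1"  # placeholder, not a real endpoint


def build_chat_request(base_url: str, api_key: str, payload: dict) -> urllib.request.Request:
    """Build (but do not send) a chat completion request against base_url."""
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


payload = {
    "model": "example-model",  # placeholder model name
    "messages": [{"role": "user", "content": "Where is my package?"}],
}
direct = build_chat_request(PROVIDER_BASE_URL, "sk-placeholder", payload)
proxied = build_chat_request(PROXY_BASE_URL, "sk-placeholder", payload)
```

Only the host differs between `direct` and `proxied`. Most provider SDKs expose an equivalent base URL setting, so the same swap applies there without touching the call sites.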

This is mundane from a research perspective and enormous from a shipping perspective.

Privacy Filter's taxonomy is eight broad categories. That is enough for a lot of general-purpose use cases. It is not enough for regulated environments.

Financial workflows need IBAN, SWIFT/BIC, routing numbers, ITIN, and tax IDs recognized as distinct entities, not lumped under account_number. Healthcare needs NPI, medical license numbers, MRN, and country-specific health identifiers like NHS numbers. Government-adjacent workflows need SSN, passport numbers, driver's license IDs by jurisdiction, and voter IDs. The distinctions matter because your compliance posture depends on them. An auditor does not want to hear that "the model tokenizes account numbers." They want to know that SSN, ITIN, IBAN, and NHS IDs are each recognized, tokenized with their own scheme, and audited separately.
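As a rough illustration of why these identifiers deserve their own categories, each one carries its own validation rule. The sketch below uses the standard IBAN mod-97 check and the NHS number mod-11 check digit; the category names and `classify_identifier` are hypothetical and are not NOI's scheme.

```python
import re


def is_valid_iban(value: str) -> bool:
    """IBAN check: move the first 4 chars to the end, map A=10..Z=35, mod 97 == 1."""
    iban = re.sub(r"\s+", "", value).upper()
    if not re.fullmatch(r"[A-Z]{2}\d{2}[A-Z0-9]{11,30}", iban):
        return False
    rearranged = iban[4:] + iban[:4]
    digits = "".join(str(int(ch, 36)) for ch in rearranged)
    return int(digits) % 97 == 1


def is_valid_nhs_number(value: str) -> bool:
    """NHS number check: 10 digits, weights 10..2 on the first nine, mod 11."""
    digits = re.sub(r"[\s-]", "", value)
    if not re.fullmatch(r"\d{10}", digits):
        return False
    total = sum(int(d) * w for d, w in zip(digits[:9], range(10, 1, -1)))
    check = 11 - (total % 11)
    if check == 11:
        check = 0
    # A computed check digit of 10 means the number is invalid.
    return check != 10 and check == int(digits[9])


def classify_identifier(value: str) -> str:
    """Return a distinct category instead of a catch-all account number."""
    if is_valid_iban(value):
        return "IBAN"
    if is_valid_nhs_number(value):
        return "NHS_NUMBER"
    return "ACCOUNT_NUMBER"


iban_category = classify_identifier("GB82 WEST 1234 5698 7654 32")
nhs_category = classify_identifier("943 476 5919")
fallback_category = classify_identifier("78432")
```

With separate categories, each identifier type can get its own token scheme and its own line in an audit report.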

NOI covers 35+ regulated identifier categories out of the box. Privacy Filter covers eight, and adding a category means further fine-tuning of the model. If you want Privacy Filter to cover NHS numbers as a distinct category, you are training a model. If you want the same in NOI, it is already there.

This is the part that does not make headlines but is the entire reason enterprises actually sign.

Production LLM traffic is streamed. A tokenization proxy that cannot detokenize streaming responses is useless for real chat experiences. NOI handles streaming detokenization as a first-class path.
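The reason streaming is its own problem: a token can arrive split across two chunks, so a naive per-chunk replace misses it. A minimal sketch of one way to handle that, assuming tokens look like `<CATEGORY_id>` and that original values never contain `<`. The function name, token format, and `max_token_len` bound are all assumptions for illustration.

```python
from typing import Iterable, Iterator


def detokenize_stream(
    chunks: Iterable[str],
    originals: dict[str, str],
    max_token_len: int = 32,
) -> Iterator[str]:
    """Restore original values in a chunked stream, buffering partial tokens."""
    buffer = ""
    for chunk in chunks:
        buffer += chunk
        # Replace every token that is now complete.
        for token, original in originals.items():
            buffer = buffer.replace(token, original)
        # Hold back a trailing "<..." that may be the start of a split token.
        cut = buffer.rfind("<")
        tail_is_open = cut != -1 and ">" not in buffer[cut:]
        if tail_is_open and len(buffer) - cut < max_token_len:
            ready, buffer = buffer[:cut], buffer[cut:]
        else:
            ready, buffer = buffer, ""
        if ready:
            yield ready
    if buffer:
        yield buffer  # flush whatever is left when the stream ends


originals = {"<PERSON_ab12>": "Priya Shankar", "<ACCOUNT_9f3c>": "78432"}
chunks = ["Hello <PER", "SON_ab12>, your order <ACCOUNT_", "9f3c> shipped."]
pieces = list(detokenize_stream(chunks, originals))
```

The length bound keeps a stray `<` in ordinary text from stalling the stream. Real provider streams arrive as structured events rather than bare strings, so a production path would apply the same buffering to each event's text delta.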

Enterprise privacy programs require audit trails: what PII was detected, when, for which request, with what token, resolved by which key. Privacy Filter gives you a model output. It does not give you an auditable trail of decisions tied to request identity.
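One way to picture such a trail is a structured record per detection, tied to the request. The sketch below is hypothetical: the field names, `AuditEvent`, and the helper functions are assumptions, not NOI's schema. Note that the record stores the token and the key identifier, never the raw value.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    request_id: str   # which request the detection belongs to
    detected_at: str  # ISO 8601 timestamp, UTC
    category: str     # what kind of PII was detected
    token: str        # the token that replaced it (never the raw value)
    key_id: str       # which tokenization key resolves the token


def audit_event(
    request_id: str,
    category: str,
    token: str,
    key_id: str,
    now: Optional[datetime] = None,
) -> AuditEvent:
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    return AuditEvent(request_id, timestamp, category, token, key_id)


def to_log_line(event: AuditEvent) -> str:
    """Serialize one event as a single JSON line for an append-only log."""
    return json.dumps(asdict(event), sort_keys=True)


fixed_time = datetime(2026, 4, 22, 12, 0, tzinfo=timezone.utc)
event = audit_event("req-123", "private_person", "<PERSON_ab12>", "key-2026-04", now=fixed_time)
line = to_log_line(event)
```

A detector alone cannot produce this record, because the request identity, token, and key all live in the layer around it.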

Healthcare and other regulated industries require a Business Associate Agreement (BAA). That is a contract and a compliance posture, not a model artifact. NOI offers this as part of the service. An open-weight model cannot.

Billing, rate limiting, per-tenant request isolation, and rotation of the tokenization key are all infrastructure concerns. They live at the proxy layer, not the detection layer.

Privacy Filter's core strength is that it is context-aware. It can detect a broader range of PII in unstructured text, including cases where classification depends on context. That is a real improvement over pattern-matching systems.

It is also slower per token than regex or a small NER model, and its category set is fixed at training time. For teams that want the quality of context-aware detection, NOI already runs a context-aware NER engine in the pipeline. The question, then, is not whether context-aware detection is useful; it is whether you want a standalone model you run yourself or a proxy that already uses one.

For teams with the engineering bandwidth to self-host and fine-tune, Privacy Filter is a good component to combine with a custom pipeline. For teams that want a privacy layer they can ship in a week, NOI is the faster path.

If you are an engineering lead evaluating this after the OpenAI privacy release, the right questions are not "which one is better." They are:

What are you actually trying to ship? If you are scrubbing a training corpus offline, Privacy Filter is a clean fit. If you are running a user-facing LLM application where responses need to contain real customer data, you need reversible tokenization, which means a tokenization system, not a detection model.

How many providers do you call? If you are building on a single provider and one call path, you can integrate Privacy Filter and a homegrown tokenization layer. If you are multi-provider and care about swapping or adding providers without rewriting privacy code, a proxy is the right shape.

What regulated categories do you handle? If eight general categories are enough, Privacy Filter is enough. If you need SSN, ITIN, IBAN, NHS IDs, medical license numbers, or jurisdiction-specific identifiers treated distinctly, you need a system with that taxonomy already built and a way to add more.

What compliance posture do you need? If you need a BAA, audit trails, persistent token stores with key rotation, and tenant-level isolation, those are not model features. They are product features.

How much of this do you want to build yourself? Everything Privacy Filter does not do is buildable. The question is whether your team should spend the next two quarters building it, or ship on top of something that already solves it.

Privacy Filter is net positive for the category NOI operates in. Here is why.

First, it validates that PII handling in LLM pipelines is a real, distinct problem. A company of OpenAI's scale releasing a dedicated model for it signals that the privacy layer is not a side concern; it is infrastructure. Teams that had been deferring privacy decisions now have a reason to actually build the layer.

Second, it commoditizes the detection component. That is good for the tokenization category. Detection was always the piece that felt "solvable enough" to delay a full privacy architecture. Now that a high-quality detector ships for free, the conversation moves on to what happens after detection: reversibility, consistency, provider coverage, compliance. Those are product questions, not model questions.

Third, it raises the quality bar on detection. That pressure is useful. It means anyone shipping a tokenization product needs their detection layer to be at least as good as Privacy Filter. NOI's NER engine is already more flexible in category coverage and configurable in policy. The performance bar is the one we will be tracking.

The one thing Privacy Filter does not change is the shape of the problem for production LLM applications. You still need reversible tokens. You still need entity consistency. You still need provider coverage. You still need audit, billing, streaming, and regulated category depth. Those are NOI's product.

OpenAI shipped a good detection model and described it honestly as a redaction aid, not a privacy guarantee. The confusion is downstream, in how the release gets framed in secondhand coverage.

The correct read is this: detection got better and cheaper this week. Tokenization, detokenization, proxying, entity consistency, and compliance posture are a different layer of the stack, and that layer is still where the real work happens. NOI operates in that layer, and the OpenAI privacy release makes the case for that layer more obvious, not less.

If you are building an LLM application where your users' real data needs to enter and exit the system cleanly, you need more than a detector. You need an architecture that can give the data back.

OpenAI Privacy Filter is an open-weight, 1.5B parameter token classification model released under Apache 2.0 on April 22, 2026. It detects and labels personally identifiable information in text across eight categories, runs locally, and is designed for high-throughput PII detection and redaction workflows.

No. Privacy Filter detects PII spans. It does not provide reversible tokenization, persistent entity mapping, detokenization of LLM responses, or multi-provider proxying. Those are the core functions of a tokenization service like NOI, which sits in the LLM request path and restores real values on the response.

Redaction replaces sensitive values with a placeholder and discards the original. It is irreversible. Tokenization replaces sensitive values with keyed, deterministic tokens that can be mapped back to the original values using a secure store. Tokenization is required whenever the LLM's response needs to contain real user data, such as in customer support, healthcare, or financial applications.

The two are complementary, not mutually exclusive. Privacy Filter is a detection component. NOI is a full tokenization proxy with its own NER engine, token store, provider coverage, and compliance features. Teams that want to run Privacy Filter in their own pipeline and combine it with custom tokenization logic can do so. Teams that want a drop-in privacy layer for LLM APIs use NOI directly.

OpenAI Privacy Filter covers eight categories: person, email, phone, address, URL, date, account number, and secret. NOI covers 35+ categories, including regulated identifiers such as SSN, ITIN, IBAN, NHS numbers, medical license numbers, and jurisdiction-specific health and financial IDs, each with dedicated tokenization schemes.

Privacy Filter is a detection model, not a proxy, so the question does not apply directly. It classifies input text in a single pass. For streaming LLM responses where PII tokens in the response need to be detokenized as chunks arrive, you need a tokenization proxy in the request path. NOI supports streaming detokenization across its supported providers.


PII tokenization for AI is the process of replacing personally identifiable information in LLM prompts with deterministic, reversible tokens before the data reaches a model provider. The original values are stored securely in a vault. The model receives a sanitized version of the prompt that preserves structure and relationships, allowing it to reason effectively without ever seeing real sensitive data. When the response returns, the tokens are replaced with the original values before the application receives them.