Tokenization vs. Masking vs. Redaction: Which Protects AI Prompts Best?

Compare tokenization, masking, and redaction for AI prompt protection. See why deterministic tokenization preserves LLM reasoning while masking and redaction break it.

PII tokenization for AI is the process of replacing personally identifiable information in LLM prompts with deterministic, reversible tokens before the data reaches a model provider. The original values are stored securely in a vault. The model receives a sanitized version of the prompt that preserves structure and relationships, allowing it to reason effectively without ever seeing real sensitive data. When the response returns, the tokens are replaced with the original values before the application receives them.

This is not a theoretical concept. It is a production technique used by engineering teams in healthcare, financial services, legal, and enterprise SaaS to ship AI features without creating data exposure, compliance violations, or governance delays. If your application sends prompts to OpenAI, Anthropic, Google Gemini, or any other model provider, and those prompts contain names, email addresses, Social Security numbers, medical identifiers, financial account numbers, or any other sensitive information, PII tokenization is how you protect that data at the infrastructure level.

The core problem is straightforward. Large language models are hosted by third-party providers. When your application sends a prompt to one of these providers, the data in that prompt leaves your environment and enters infrastructure you do not control. For most casual use cases, this is not an issue. But the moment your prompts contain customer names, patient records, financial data, or employee information, you have created a data governance problem.

Research confirms this is not a theoretical risk. Nasr et al. (2023) demonstrated extractable memorization in production LLMs, showing that 16.9% of generated responses in their divergence attack contained memorized PII, with 85.8% of that PII being authentic. A 2026 study published in Nature Communications showed that LLMs exhibit "mosaic memory," assembling information from similar sequences to reproduce training data even when exact duplicates are removed. LayerX Security's 2025 enterprise report found that 77% of employees paste work data into AI tools, creating a massive surface area for sensitive data exposure.

Security teams see risk. Legal teams see exposure. Compliance teams see unanswered questions about data retention, cross-border transfers, and regulatory obligations under HIPAA, GDPR, PCI-DSS, SOX, and CCPA. Product teams see delay. Features stall in legal review. Projects that worked perfectly in prototype quietly die because nobody can sign off on the data handling.

PII tokenization solves this by ensuring sensitive data never leaves your environment in its raw form. The model provider receives tokens. Your application receives real data. The privacy layer is invisible to end users and requires no changes to your prompts, logic, or application architecture.

The process follows a clear sequence. Understanding each step matters because it explains why tokenization preserves model quality while other approaches degrade it.

Step 1: Your application sends a request as usual

Your application calls an LLM API the same way it always does. The only change is the base URL in your SDK client, which points to the tokenization proxy instead of directly to the model provider. No new SDK, no middleware, no changes to prompt templates or application logic. In the case of NOI, the integration is two lines of code: add base_url to your existing OpenAI client constructor. The request leaves your application in its normal format.

Step 2: Sensitive data is detected in real time

The tokenization layer inspects the prompt content before it leaves your environment. Using a combination of named entity recognition (NER) models, pattern matching, and custom recognizers, it identifies sensitive entities: names, email addresses, phone numbers, government IDs (Social Security numbers, passport numbers, driver license numbers), financial data (credit card numbers, bank account numbers, routing numbers), medical identifiers (medical record numbers, patient IDs, health plan numbers), and other configurable entity types. NOI uses Microsoft Presidio as its detection engine, with custom NER models layered on top. Detection happens in real time, on every request, before any data reaches the model provider.
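The pattern-matching part of this detection step can be illustrated with a minimal sketch. It assumes two deliberately simple regular expressions; the `Detection` type, the `PATTERNS` table, and the `detect` function are hypothetical names for illustration, not Presidio's or NOI's API. A production detector would combine patterns like these with NER models and checksum validation.

```python
import re
from typing import NamedTuple


class Detection(NamedTuple):
    entity_type: str
    start: int
    end: int
    text: str


# Hypothetical, simplified recognizers (assumption: real systems use far
# stricter patterns plus NER models for names and addresses).
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def detect(prompt: str) -> list[Detection]:
    """Return every pattern match in the prompt, sorted by position."""
    found = [
        Detection(entity_type, m.start(), m.end(), m.group())
        for entity_type, pattern in PATTERNS.items()
        for m in pattern.finditer(prompt)
    ]
    return sorted(found, key=lambda d: d.start)


hits = detect("Email jane.doe@example.com about SSN 123-45-6789.")
```

Each hit carries its character span, which is what a later replacement step would need in order to swap the value for a token.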

Step 3: Values are replaced with deterministic tokens

This is where tokenization diverges from simpler approaches like masking and redaction. Each detected sensitive value is replaced with a deterministic token: a unique, consistent substitute generated from the original value. The critical property of deterministic tokenization is that the same input always produces the same token. If "John Smith" appears three times in a conversation, it maps to the same token every time. This preserves entity identity across the prompt, allowing the model to understand that references to the same person are, in fact, the same person.

The original values are stored securely in a tokenization vault. In the case of NOI, this is the Enigma Vault, which holds PCI Level 1 and ISO 27001 certifications. The vault maintains the mapping between tokens and original values, enabling detokenization on the response path.
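One common way to get the "same input, same token" property is a keyed hash. The sketch below assumes HMAC-SHA256 and an in-memory dictionary standing in for the vault; the `Vault` class and the `TKN_` prefix length are illustrative assumptions, not a description of how NOI or Enigma Vault generate or store tokens.

```python
import hashlib
import hmac


class Vault:
    """In-memory stand-in for a tokenization vault (hypothetical, for illustration)."""

    def __init__(self, secret: bytes) -> None:
        self._secret = secret
        self._originals: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # Keyed hash: the same value always yields the same token, and the
        # token cannot be recomputed by anyone who lacks the secret.
        digest = hmac.new(self._secret, value.encode("utf-8"), hashlib.sha256).hexdigest()
        token = f"TKN_{digest[:12]}"
        self._originals[token] = value  # mapping used later for detokenization
        return token

    def detokenize(self, token: str) -> str:
        return self._originals[token]


vault = Vault(secret=b"demo-secret-do-not-use")
first = vault.tokenize("John Smith")
second = vault.tokenize("John Smith")
other = vault.tokenize("Jane Doe")
```

Here `first` and `second` are identical while `other` differs, which is the entity-identity property described above. Truncating the digest keeps tokens short at the cost of a small collision risk, so a real system would need to handle collisions explicitly.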

Step 4: A sanitized request is forwarded to the model

The model provider receives a prompt that looks structurally identical to the original, but contains tokens instead of real sensitive data. The model processes the prompt normally. It reasons over the relationships, generates a response, and returns output. Because the tokens are deterministic and unique per entity, the model can track relationships ("Patient A was referred by Doctor B") without ever knowing who Patient A or Doctor B actually are.

Step 5: Responses are detokenized before reaching your application

If the model response contains any tokens (which it often will, since the model may reference tokenized entities in its output), the tokenization layer replaces them with the original values from the vault. Your application receives a response containing real data. The end user sees a seamless experience. The model provider never saw real PII. This round-trip detokenization is what makes the approach practical for production applications where users expect to see real names, real account numbers, and real data in the AI-generated response.
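The response path can be sketched as a single substitution pass. This assumes tokens shaped like `TKN_` followed by four digits (the format this article uses in its examples) and a plain dictionary in place of the vault lookup; `detokenize_response` is a hypothetical helper name.

```python
import re

# Assumed token shape: "TKN_" plus four digits.
TOKEN_PATTERN = re.compile(r"TKN_\d{4}")


def detokenize_response(text: str, mapping: dict[str, str]) -> str:
    """Swap each known token back to its original value; leave unknown tokens untouched."""
    return TOKEN_PATTERN.sub(lambda m: mapping.get(m.group(), m.group()), text)


mapping = {"TKN_4829": "Sarah Chen", "TKN_7163": "Michael Torres"}
model_output = "Dr. TKN_4829 referred the patient to Dr. TKN_7163."
restored = detokenize_response(model_output, mapping)
```

Leaving unknown tokens in place, rather than raising, is a design choice made for this sketch: a model may occasionally emit a string that looks like a token but was never issued.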

A practical tokenization system detects and tokenizes a broad range of entity types. NOI detects 10+ PII entity types automatically, including: full names (first, last, and combined), email addresses, phone numbers, government IDs (Social Security numbers, passport numbers, driver license numbers), financial data (credit card numbers, bank account numbers, routing numbers), medical identifiers (medical record numbers, patient IDs, health plan numbers), dates of birth, physical addresses, and IP addresses. Confidence thresholds are configurable per entity type, and custom entity types can be defined per tenant or use case through the admin console.
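Per-entity confidence thresholds amount to a filter over the detector's output. The sketch below is illustrative only: the threshold values, the dictionary-shaped detections, and the `apply_thresholds` name are assumptions, not NOI's configuration format.

```python
# Hypothetical per-entity thresholds; names and values are illustrative only.
DEFAULT_THRESHOLD = 0.5
THRESHOLDS = {"PERSON": 0.85, "US_SSN": 0.4}


def apply_thresholds(detections: list[dict]) -> list[dict]:
    """Keep detections whose score meets the threshold for their entity type."""
    return [
        d for d in detections
        if d["score"] >= THRESHOLDS.get(d["entity_type"], DEFAULT_THRESHOLD)
    ]


candidates = [
    {"entity_type": "PERSON", "text": "Jordan", "score": 0.60},
    {"entity_type": "US_SSN", "text": "123-45-6789", "score": 0.55},
    {"entity_type": "EMAIL", "text": "a@example.com", "score": 0.45},
]
kept = apply_thresholds(candidates)
```

A lower threshold for high-risk types such as government IDs trades more false positives for fewer misses, while a higher threshold for names avoids tokenizing ordinary words.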

PII tokenization is frequently confused with masking and redaction. These are fundamentally different approaches with different trade-offs for LLM use cases.

Masking replaces sensitive data with generic placeholders. A name becomes [PERSON]. An email becomes [EMAIL]. The problem is that all names become the same [PERSON] placeholder. If your prompt mentions three different customers, the model sees [PERSON], [PERSON], and [PERSON]. It cannot distinguish between them. Multi-entity reasoning breaks down. This is the approach used by LiteLLM's Presidio-based guardrail, and it is common in AI gateways like Portkey.

Redaction removes sensitive data entirely. The value is stripped from the prompt and replaced with nothing or a generic marker like [REDACTED]. This is even more destructive than masking because the model loses not just entity identity but the entity itself. Redaction also prevents round-trip restoration: you cannot put back what you deleted. Nightfall AI's approach to LLM data protection is redaction-based.

Tokenization replaces each unique value with a unique, deterministic token. "Dr. Sarah Chen" might become "Dr. TKN_4829" and "Dr. Michael Torres" might become "Dr. TKN_7163." The model sees two distinct entities, understands the referral relationship, and can reason about it coherently. On the response path, the tokens are replaced with the original names. Your users see real data. The model never did. This is the approach used by NOI and Skyflow.

For LLM use cases where entity relationships matter (which is most production use cases), tokenization is the only approach that protects data without degrading output quality.
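The difference between the three approaches is easiest to see on one prompt. This sketch hard-codes the two names and the token values from the example above instead of running a detector; `replace_all` is a hypothetical helper.

```python
PROMPT = (
    "Dr. Sarah Chen referred the patient to Dr. Michael Torres. "
    "Dr. Sarah Chen will follow up."
)
NAMES = ["Sarah Chen", "Michael Torres"]
TOKENS = {"Sarah Chen": "TKN_4829", "Michael Torres": "TKN_7163"}


def replace_all(text, replacement_for):
    """Replace every known name using the given strategy function."""
    for name in NAMES:
        text = text.replace(name, replacement_for(name))
    return text


# Masking: every name collapses to the same placeholder.
masked = replace_all(PROMPT, lambda name: "[PERSON]")
# Redaction: the value is removed outright.
redacted = replace_all(PROMPT, lambda name: "")
# Tokenization: each distinct name keeps a distinct, stable stand-in.
tokenized = replace_all(PROMPT, lambda name: TOKENS[name])
```

In `masked`, three identical `[PERSON]` markers hide the fact that the first and third mentions are the same doctor. In `tokenized`, `TKN_4829` appears twice and `TKN_7163` once, so the referral relationship survives.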

PII tokenization operates at the infrastructure layer, not the application layer. Application-level approaches (like modifying prompt templates to exclude sensitive data, or asking developers to manually scrub inputs) are fragile, error-prone, and do not scale. Infrastructure-level approaches apply protection automatically to all traffic, regardless of which application, prompt template, or developer generated the request.

The most effective architecture is a reverse proxy that sits between your application and the model provider. The application sends requests to the proxy. The proxy handles detection, tokenization, forwarding, and detokenization. The application does not need to know that tokenization is happening. This transparency means you can add PII protection to an existing LLM integration without changing your application code, prompt logic, or deployment architecture. NOI uses this proxy architecture, and it is why the integration requires only a base_url change.
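The proxy's round trip can be sketched as one function that wraps the provider call. Everything here is a simplification under stated assumptions: the entity list is passed in rather than detected, per-request counter tokens stand in for vault-backed deterministic tokens, and `fake_model` replaces a real provider. None of these names come from NOI.

```python
from typing import Callable


def proxy_call(prompt: str, entities: list[str], send_to_model: Callable[[str], str]) -> str:
    """Tokenize known entities, forward the sanitized prompt, then restore the reply."""
    mapping: dict[str, str] = {}
    sanitized = prompt
    for i, value in enumerate(dict.fromkeys(entities)):  # de-duplicate, keep order
        token = f"TKN_{i:04d}"
        mapping[token] = value
        sanitized = sanitized.replace(value, token)
    reply = send_to_model(sanitized)
    for token, value in mapping.items():
        reply = reply.replace(token, value)
    return reply


seen_by_provider: list[str] = []


def fake_model(sanitized_prompt: str) -> str:
    # Stand-in for a provider call; echoes the prompt so the round trip is visible.
    seen_by_provider.append(sanitized_prompt)
    return f"Summary: {sanitized_prompt}"


answer = proxy_call("Alice Wong emailed Bob Lee.", ["Alice Wong", "Bob Lee"], fake_model)
```

The caller passes in a normal prompt and gets back a normal reply, while `seen_by_provider` shows that only tokens crossed the boundary.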

PII tokenization directly addresses several regulatory requirements that affect AI deployments in regulated industries.

Under HIPAA, protected health information (PHI) must not be disclosed to unauthorized entities. If your LLM provider is not a covered entity or business associate, sending PHI to them in raw form creates liability. Tokenization keeps raw PHI from reaching the provider.

Under GDPR, personal data transfers to third countries require adequate safeguards. If your LLM provider operates outside the EU, sending personal data raises transfer risk under Schrems II. With tokenization, the provider receives only tokens it cannot reverse, so no directly identifying data is transferred. The token-to-value mapping in the vault remains personal data (GDPR treats pseudonymized data as personal data for anyone able to re-identify it), so the vault must stay inside your controlled environment.

Under PCI-DSS, cardholder data must be protected in transit and at rest. NOI runs on PCI Level 1 certified infrastructure (Enigma Vault), which is the highest PCI DSS compliance level.

Under SOX, financial data must be auditable and controlled. NOI provides a full audit trail: every PII detection is logged with entity type, confidence score, session ID, provider, model, and timestamp.

In each case, the compliance argument is the same: if the sensitive data never leaves your environment, the regulatory obligation to the model provider does not arise.
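An audit record with the fields listed above (entity type, confidence score, session ID, provider, model, timestamp) might look like the following sketch. The class name, field names, and JSON-lines serialization are assumptions for illustration, not NOI's log schema. The record deliberately omits the detected value itself.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DetectionAuditRecord:
    """One logged detection; the raw sensitive value is intentionally not stored."""
    entity_type: str
    confidence: float
    session_id: str
    provider: str
    model: str
    timestamp: str  # ISO 8601, UTC


def to_log_line(record: DetectionAuditRecord) -> str:
    """Serialize a record as one JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


record = DetectionAuditRecord(
    entity_type="US_SSN",
    confidence=0.97,
    session_id="sess-123",       # hypothetical identifier
    provider="openai",
    model="example-model",       # placeholder model name
    timestamp=datetime(2025, 1, 1, tzinfo=timezone.utc).isoformat(),
)
line = to_log_line(record)
```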

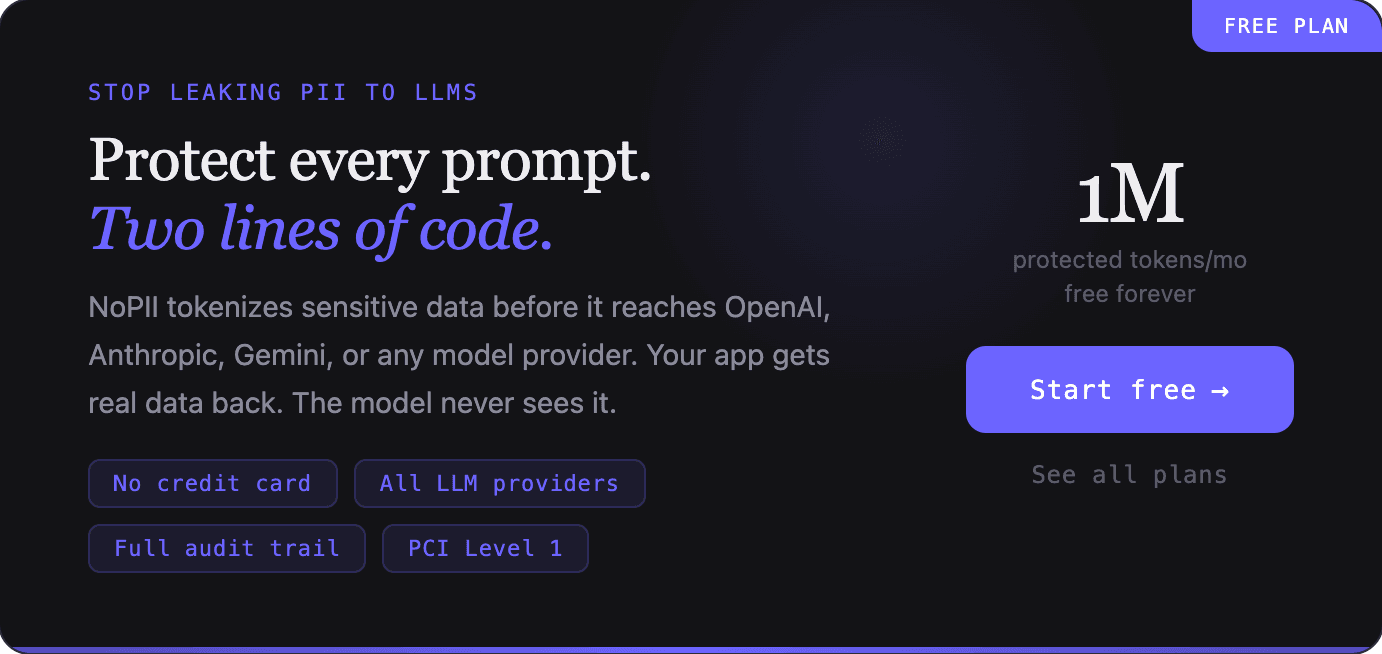

The fastest path to PII tokenization is a managed proxy service. With NOI, the entire integration consists of changing the base_url parameter in your existing OpenAI SDK client:

Before: client = OpenAI(api_key="sk-...")

After: client = OpenAI(api_key="sk-...", base_url="https://your-nopii-instance.com/v1")

That is the complete code change. Two lines. The proxy handles detection, tokenization, forwarding to the model provider, and detokenization of responses. It works with OpenAI, Anthropic, Gemini, xAI, DeepSeek, Mistral, Groq, Together, and Fireworks. It supports SSE streaming for both OpenAI and Anthropic APIs.

For teams that prefer to build their own solution, the core components are: a PII detection engine (Microsoft Presidio is the most common open-source option), a tokenization vault for storing mappings between tokens and original values, a reverse proxy for intercepting LLM API traffic, an admin interface for configuring detection rules and reviewing audit logs, and audit logging for compliance evidence. Building and maintaining all of these components typically requires 3 to 6 months of engineering effort, plus ongoing operational management. NOI provides all of them as a managed service with a free tier (1M protected tokens per month, no credit card required).

When evaluating tokenization solutions, assess whether the tokenization is deterministic (the same input always produces the same output), whether the solution supports round-trip detokenization, whether it fails closed by default (NOI blocks requests if tokenization fails, and this behavior cannot be turned off), whether it integrates transparently without application code changes, whether it provides a compliance-grade audit trail, and whether it handles context phrase neutralization (replacing trigger terms like "SSN" with neutral labels to prevent LLM safety refusals on tokenized data). Context phrase neutralization is a feature unique to NOI among the products reviewed in this guide.
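Two of these criteria, fail-closed behavior and context phrase neutralization, can be sketched together. The trigger phrases, the neutral label, and the names `RequestBlocked`, `neutralize_context`, and `sanitize_or_block` are all hypothetical; this shows the general idea under those assumptions, not NOI's implementation.

```python
import re
from typing import Callable


class RequestBlocked(Exception):
    """Raised instead of forwarding a prompt that could not be sanitized."""


# Hypothetical trigger phrases mapped to a neutral label.
NEUTRAL_LABELS = {
    r"\bSSN\b": "reference number",
    r"\bsocial security number\b": "reference number",
}


def neutralize_context(prompt: str) -> str:
    """Replace trigger terms so tokenized values are not refused as sensitive."""
    for pattern, label in NEUTRAL_LABELS.items():
        prompt = re.sub(pattern, label, prompt, flags=re.IGNORECASE)
    return prompt


def sanitize_or_block(prompt: str, tokenize: Callable[[str], str]) -> str:
    """Fail closed: any tokenization error blocks the request rather than leaking raw text."""
    try:
        # Tokenize first so context words are still available to the detector.
        return neutralize_context(tokenize(prompt))
    except Exception as exc:
        raise RequestBlocked("tokenization failed; request not forwarded") from exc


def broken_tokenizer(prompt: str) -> str:
    raise RuntimeError("vault unreachable")


ok = sanitize_or_block("SSN: TKN_4829", lambda p: p)
try:
    sanitize_or_block("SSN: 123-45-6789", broken_tokenizer)
    blocked = False
except RequestBlocked:
    blocked = True
```

The key property is that the error path has no branch that returns the original prompt, so a vault outage results in a blocked request rather than a raw one.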

The market for PII protection in AI workflows is still emerging, and different vendors take meaningfully different approaches. Understanding the landscape helps teams evaluate their options with clarity.

Protecto offers a broad AI data privacy platform that covers prompts, RAG pipelines, training data, and agentic AI workflows. It uses a proprietary DeepSight detection engine and provides what it calls context-preserving masking. The trade-off is integration complexity: Protecto requires SDK integration or API instrumentation rather than a transparent proxy approach. Skyflow provides a general-purpose data privacy vault with an LLM Privacy Vault extension, offering deterministic tokenization through its polymorphic encryption architecture and data residency across 150+ countries, but the integration typically takes weeks.

Nightfall AI is a DLP platform that monitors and blocks sensitive data across SaaS applications, endpoints, browsers, and AI tools. Its Firewall for AI product uses redaction rather than tokenization, meaning it removes or replaces PII with generic placeholders. This protects data but degrades LLM output quality for multi-entity prompts and does not support round-trip detokenization. AI gateways like LiteLLM and Portkey offer PII masking as one feature among many, using placeholder approaches that collapse entity identity.

NOI takes a different architectural approach: a transparent reverse proxy that requires only a base_url change in the existing SDK client. Combined with deterministic tokenization backed by PCI Level 1 certified vault infrastructure (Enigma Vault), it occupies a distinct position in the market: the fastest path to compliance-grade LLM PII protection.

PII tokenization is not an abstract security concept. It is deployed in production across industries where AI features interact with sensitive data daily. Understanding where it applies helps teams assess whether their own workflows require this level of protection.

In healthcare, clinical documentation AI tools process physician notes, patient messages, and care coordination summaries. These documents routinely contain patient names, dates of birth, medical record numbers, diagnosis codes, and insurance identifiers. Without tokenization, every AI-assisted clinical note sends protected health information to a third-party model provider. With tokenization, the model receives the clinical context it needs while patient identifiers are replaced with deterministic tokens.

In financial services, AI-powered fraud detection systems analyze transaction patterns that include customer names, account numbers, routing numbers, and transaction amounts. Customer support chatbots process inquiries that contain account holder information, recent transaction details, and sometimes partial Social Security numbers. Wealth management applications use LLMs to generate client portfolio summaries that reference specific individuals and their financial positions. In each case, tokenization allows the AI to reason over the data relationships while keeping the actual financial identifiers within the institution's controlled environment.

In legal workflows, AI-assisted research and document review tools process contracts, depositions, court filings, and correspondence that contain party names, case numbers, attorney identifiers, and privileged communications. Attorney-client privilege does not survive sending case details to a third-party LLM in raw form. Tokenization preserves the document structure and entity relationships the model needs for analysis while ensuring that privileged information never leaves the firm's environment.

In customer support, AI tools summarize ticket histories, draft responses, and route inquiries based on content analysis. Every ticket contains customer names, email addresses, account numbers, and often partial payment information. Support teams need the AI to distinguish between different customers in a thread, track which agent handled which interaction, and generate responses that reference the correct customer by name. Tokenization makes all of this possible without exposing any customer PII to the model provider.

OpenAI's Privacy Filter is a strong open-weight PII detection model, but it redacts irreversibly, covers only eight categories, and is not a proxy. Production LLM applications need reversible tokenization, persistent entity mapping across turns, multi-provider proxying, and regulated identifier coverage, none of which Privacy Filter provides. NOI operates in that layer of the stack, and the OpenAI release makes the case for that layer sharper, not weaker.
