Tokenization vs. Masking vs. Redaction: Which Protects AI Prompts Best?

Compare tokenization, masking, and redaction for AI prompt protection. See why deterministic tokenization preserves LLM reasoning while masking and redaction break it.

When engineering teams need to protect sensitive data in LLM prompts, they evaluate three approaches: tokenization, masking, and redaction. All three remove real PII from the prompt before it reaches the model provider. But they do so in fundamentally different ways, with dramatically different effects on the quality of the model output your application receives.

This guide compares the three approaches on the dimensions that matter most for production AI applications: data protection effectiveness, LLM reasoning quality, reversibility, compliance suitability, and implementation complexity. The analysis is based on direct testing against production LLM workloads and reflects the current state of available tools as of April 2026.

Every time your application sends a prompt to an LLM API, the content of that prompt travels to third-party infrastructure. If the prompt contains customer names, patient records, financial data, or employee information, that data is now outside your control. Research from Nasr et al. (2023) demonstrated that production LLMs can memorize and reproduce training data, including PII, through targeted prompting. A comprehensive IJCAI-25 survey on PII leakage in LLMs confirmed that sensitive data can be extracted through natural language prompts, template-based attacks, and adversarial divergence techniques.

For regulated industries, this creates liability under HIPAA, GDPR, PCI-DSS, SOX, and CCPA. LayerX Security's 2025 enterprise research found that 77% of employees paste work data into AI tools, meaning the exposure surface is not limited to purpose-built applications. Shadow AI usage compounds the problem.

The question is not whether to protect PII in AI prompts. The question is which protection method preserves the value of your AI investment while satisfying your security and compliance requirements.

Masking replaces each detected PII entity with a generic placeholder that indicates the entity type. A person's name becomes [PERSON]. An email address becomes [EMAIL]. A Social Security number becomes [SSN]. The placeholder tells the model what type of entity was present, but not its value. This is the approach used by LiteLLM's Presidio-based PII guardrail, and it is common in AI gateway products like Portkey that offer PII features as one of many guardrails.

Masking creates a significant problem for LLM reasoning: all entities of the same type become identical. Consider a prompt that says: "Sarah Johnson called about her account. Her manager, David Chen, approved the refund. Please summarize the interaction between Sarah Johnson and David Chen." After masking, this becomes: "[PERSON] called about her account. Her manager, [PERSON], approved the refund. Please summarize the interaction between [PERSON] and [PERSON]." The model cannot distinguish Sarah from David. It does not know who called, who approved, or who the summary should reference. In multi-entity scenarios, which represent the majority of production AI use cases, masking produces confused, inaccurate, or entirely unusable output.
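The collapse described above can be reproduced in a few lines. This is a minimal sketch, not any product's implementation: the `DETECTED` dictionary is a hypothetical stand-in for the output of an NER-based detector, and `mask` is an illustrative helper name.

```python
import re

# Hypothetical detector output: in practice an NER engine would supply these.
DETECTED = {"Sarah Johnson": "PERSON", "David Chen": "PERSON"}


def mask(text: str, detected: dict[str, str]) -> str:
    """Replace every detected value with its type placeholder, e.g. [PERSON]."""
    for value, entity_type in detected.items():
        text = text.replace(value, f"[{entity_type}]")
    return text


prompt = (
    "Sarah Johnson called about her account. Her manager, David Chen, "
    "approved the refund. Please summarize the interaction between "
    "Sarah Johnson and David Chen."
)

masked = mask(prompt, DETECTED)
print(masked)

# Two distinct people now share one placeholder, so nothing in the text
# tells the model which [PERSON] called and which one approved.
print(set(re.findall(r"\[[A-Z]+\]", masked)))  # {'[PERSON]'}
```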

Standard masking is not reliably reversible. Once a name is replaced with [PERSON], there is no vault-backed mapping to the original value. Some implementations maintain a lookup table for re-identification, but this is optional, fragile, and not secured by default. LiteLLM's output parsing for re-identification, for example, is documented as "limited and optional." The application typically receives [PERSON] in the response, not the original name.

Masking can work for single-entity prompts where identity is irrelevant. For example, classifying the sentiment of a customer review does not require entity identity. If your prompts never reference multiple people, accounts, or entities by name, masking may be sufficient. For most production applications involving customer support, healthcare, financial services, or legal workflows, this is not the case.

Redaction removes sensitive data from the prompt entirely. The value is stripped out and replaced with nothing, a blank, or a generic marker like [REDACTED]. In many implementations, redaction does not preserve even the entity type, which masking at least retains. Nightfall AI's Firewall for AI product uses a redaction-based approach for LLM data protection.

Redaction is the most destructive approach for LLM reasoning. It removes information the model may need to produce a coherent response. Consider a clinical note: "Dr. Sarah Chen referred the patient to Dr. Michael Torres at City General Hospital for a cardiology consultation." After redaction: "[REDACTED] referred the patient to [REDACTED] at [REDACTED] for a cardiology consultation." The model cannot determine who made the referral, who received it, or where it was directed. The relationships between entities are severed. For any task that requires the model to reason about relationships between people, places, organizations, or accounts, redaction produces fundamentally broken output.

Redaction is irreversible. The data is gone: there is no vault, no mapping, and no way to restore original values in the model response. Your application receives redacted output. If your users need to see real names, real account numbers, or real identifiers in the AI-generated response, redaction makes this impossible.

Redaction works for narrow use cases where the sensitive data is truly irrelevant to the task: classifying document types, detecting language, or performing tasks where only the non-PII content matters. In practice, these use cases are a small minority of production AI applications.

Tokenization replaces each detected PII entity with a unique, deterministic token. "Sarah Johnson" might become TKN_A7F2. "David Chen" might become TKN_B3E9. The critical properties are: each unique value gets a unique token (Sarah and David are different tokens), and the same value always produces the same token (Sarah is TKN_A7F2 every time she appears, including across multiple turns of a conversation). The original values are stored in a secure vault, enabling detokenization. This is the approach used by NOI and Skyflow.

The model receives a prompt with the same structure and relationships as the original, but with tokens instead of real data. Using the earlier example: "TKN_A7F2 called about her account. Her manager, TKN_B3E9, approved the refund. Please summarize the interaction between TKN_A7F2 and TKN_B3E9." The model can clearly distinguish the two people. It understands who called, who approved, and who the summary should reference. The output quality is comparable to what the model would produce with real data, because the structural and relational information the model needs for reasoning is fully preserved.
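The two properties above (a unique token per value, and the same token for the same value every time) can be sketched as follows. Everything here is an assumption made for illustration: the HMAC-based derivation, the `TKN_` plus eight hex characters format (longer than the four-character examples in the text, to make collisions less likely), the `Tokenizer` class name, and the in-memory dictionary standing in for a secure vault. It does not describe how NOI or Skyflow generate tokens.

```python
import hashlib
import hmac


class Tokenizer:
    """Deterministic tokenizer; a plain dict stands in for a secure vault."""

    def __init__(self, secret: bytes) -> None:
        self._secret = secret
        self._vault: dict[str, str] = {}  # token -> original value

    def token_for(self, value: str) -> str:
        # A keyed hash gives the same token for the same value on every call.
        digest = hmac.new(self._secret, value.encode("utf-8"), hashlib.sha256)
        token = "TKN_" + digest.hexdigest()[:8].upper()
        # Refuse to reuse a token for a different value (truncation collision).
        if self._vault.setdefault(token, value) != value:
            raise RuntimeError("token collision; refusing to continue")
        return token

    def tokenize(self, text: str, detected: list[str]) -> str:
        # Longest values first, so a value containing another is replaced whole.
        for value in sorted(detected, key=len, reverse=True):
            text = text.replace(value, self.token_for(value))
        return text

    def reveal(self, token: str) -> str:
        """Look up the original value for a token (the vault read path)."""
        return self._vault[token]


tokenizer = Tokenizer(secret=b"demo-only-secret")  # hypothetical key
prompt = (
    "Sarah Johnson called about her account. Her manager, David Chen, "
    "approved the refund. Please summarize the interaction between "
    "Sarah Johnson and David Chen."
)
tokenized = tokenizer.tokenize(prompt, ["Sarah Johnson", "David Chen"])
print(tokenized)
```

Because the token depends only on the secret and the value, a later turn of the same conversation that mentions Sarah Johnson again would receive the same token without any extra bookkeeping.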

Tokenization is fully reversible. When the model response contains tokens, the tokenization layer looks up the original values in the vault and replaces the tokens before the response reaches your application. Your users see real names, real data, and a seamless experience. The model never saw any of it. NOI handles this detokenization automatically on every response, including in SSE streaming scenarios where tokens arrive incrementally.
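Streaming is the harder case, because a token can be split across two chunks (for example `TKN_A` followed by `7F2`). One way to handle this, sketched below under stated assumptions, is to hold back any trailing text that could still grow into a token. The fixed `TKN_` plus four hex characters format follows the examples in this article; the `detokenize_stream` function and the dictionary vault are hypothetical and are not a description of NOI's implementation.

```python
import re
from typing import Iterable, Iterator

HEX_LEN = 4  # assumed token format: TKN_ followed by 4 uppercase hex chars
TOKEN_RE = re.compile(rf"TKN_[0-9A-F]{{{HEX_LEN}}}")
# Matches an incomplete token prefix sitting at the very end of the buffer.
PARTIAL_RE = re.compile(rf"T(K(N(_[0-9A-F]{{0,{HEX_LEN - 1}}})?)?)?\Z")


def detokenize_stream(chunks: Iterable[str], vault: dict[str, str]) -> Iterator[str]:
    """Yield text with tokens restored, buffering tokens split across chunks."""

    def restore(text: str) -> str:
        # Tokens missing from the vault are passed through unchanged.
        return TOKEN_RE.sub(lambda m: vault.get(m.group(0), m.group(0)), text)

    buffer = ""
    for chunk in chunks:
        buffer += chunk
        partial = PARTIAL_RE.search(buffer)
        # Emit everything before a possible partial token; keep the rest.
        cut = partial.start() if partial else len(buffer)
        ready, buffer = buffer[:cut], buffer[cut:]
        if ready:
            yield restore(ready)
    if buffer:
        # End of stream: whatever is left can no longer grow into a token.
        yield restore(buffer)


vault = {"TKN_A7F2": "Sarah Johnson", "TKN_B3E9": "David Chen"}
chunks = ["TKN_A", "7F2 called; TK", "N_B3E9 approved", " the refund."]
print("".join(detokenize_stream(chunks, vault)))
# Sarah Johnson called; David Chen approved the refund.
```

The trade-off in this design is a small delay: at most one partial token's worth of text is held back until the next chunk arrives or the stream ends.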

Tokenization is appropriate for any LLM use case where entity identity and relationships matter in the model output. This includes customer support (tracking which customer, which account, which agent), healthcare (tracking which patient, which provider, which diagnosis), financial services (tracking which client, which account, which transaction), legal (tracking which party, which counsel, which filing), and HR (tracking which employee, which manager, which department). In practice, this covers the vast majority of production AI applications.

| Dimension | Tokenization | Masking | Redaction |
|---|---|---|---|
| Data protection | PII never reaches provider | PII never reaches provider | PII never reaches provider |
| LLM reasoning quality | Preserved. Entities distinct. | Degraded. Entities collapsed. | Destroyed. Entities removed. |
| Reversibility | Fully reversible (vault) | Rarely reversible | Never reversible |
| Entity identity | Unique token per entity | Same placeholder per type | No entity information |
| Multi-entity prompts | Works correctly | Breaks down | Fails completely |
| Round-trip detokenization | Automatic | Optional / fragile | Impossible |
| Compliance audit trail | Full metadata per detection | Basic logging | Basic logging |
| Implementation complexity | Requires vault + proxy | Simpler (NER + placeholder) | Simplest (NER + delete) |
| Products using this | NOI, Skyflow | LiteLLM, Portkey | Nightfall AI |

There is a subtle but important problem that affects tokenized prompts specifically. When PII values are tokenized but the contextual language around them is preserved, the prompt may still contain trigger terms that cause LLM safety filters to refuse the request. For example, if a prompt says "The patient's SSN is TKN_8472," the value is tokenized but the term "SSN" remains. Some models will refuse to process this prompt because their safety filters detect a request involving Social Security numbers, even though no actual SSN is present.

Context phrase neutralization solves this by replacing trigger terms ("SSN," "Social Security number," "credit card number") with neutral labels ("identifier," "reference number") so the model processes the prompt normally. Among the products reviewed in this comparison, NOI is the only one that implements context phrase neutralization. This is a practical consideration that teams often discover only after deploying tokenization in production.
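As a rough illustration of the idea, the sketch below swaps trigger terms for neutral labels with a case-insensitive, whole-word match. The `NEUTRAL_LABELS` mapping pairs the terms and labels mentioned above in a way chosen for this example; the pairing and the `neutralize` helper are assumptions, not NOI's actual term list or logic.

```python
import re

# Hypothetical mapping of trigger terms to neutral labels.
NEUTRAL_LABELS = {
    "Social Security number": "identifier",
    "SSN": "identifier",
    "credit card number": "reference number",
}

# Longest terms first, so a long phrase wins over a shorter overlapping term.
_TERMS = sorted(NEUTRAL_LABELS, key=len, reverse=True)
_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(term) for term in _TERMS) + r")\b",
    re.IGNORECASE,
)
_LOOKUP = {term.lower(): label for term, label in NEUTRAL_LABELS.items()}


def neutralize(text: str) -> str:
    """Replace trigger terms with neutral labels, leaving tokens untouched."""
    return _PATTERN.sub(lambda m: _LOOKUP[m.group(0).lower()], text)


print(neutralize("The patient's SSN is TKN_8472."))
# The patient's identifier is TKN_8472.
```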

Choose masking if your prompts contain only single-entity references, entity identity is irrelevant, you do not need round-trip restoration, and simplicity is your priority.

Choose redaction if the sensitive data is completely irrelevant to the AI task and you never need to reference it in outputs.

Choose tokenization if your prompts reference multiple entities of the same type, the model needs to distinguish between entities, your application needs to display real values in AI-generated responses, you operate in a regulated industry, and you need fail-safe behavior.

For healthcare, financial services, legal, customer support, HR, and most enterprise SaaS applications, tokenization is the only approach that protects data without compromising the value of the AI output.

The cost of choosing the wrong PII protection method compounds over time. If you deploy masking in production and discover multi-entity reasoning failures after launch, you face a choice between degraded AI output quality (which affects user trust and product value) and a migration to tokenization (which requires engineering time and testing).

Tokenization has a higher upfront implementation cost than masking or redaction because it requires a secure vault and detokenization infrastructure. But it avoids the migration costs, output quality issues, and compliance gaps that simpler approaches create downstream. For teams building production AI features in regulated industries, the total cost of ownership for tokenization is lower than for masking or redaction, because you avoid the rework.

The decision framework is simple: if you are building a prototype or a single-purpose tool where entity identity is irrelevant, masking or redaction will work. If you are building a production feature that users depend on, that handles multiple entities, that needs to display real data in responses, and that operates under regulatory oversight, tokenization is the correct choice from the start. Choosing it later costs more than choosing it now.

An often-overlooked dimension of the tokenization vs. masking vs. redaction comparison is what happens when the protection mechanism itself encounters an error. Detection engines are not perfect. Network connections to vaults can fail. Regex patterns can miss edge cases. The question is: when protection fails, does the system fail open (forwarding unprotected data to the model) or fail closed (blocking the request)?

This distinction matters enormously for regulated industries. If your tokenization system encounters an error and silently forwards raw PII to the model provider, you have a compliance incident. If it blocks the request, you have a temporary service degradation but no data exposure. The difference between these outcomes can be the difference between a normal operating day and a reportable breach.

Most masking and redaction implementations, particularly those built into AI gateways as one feature among many, offer configurable fail modes. The problem is that "configurable" means "someone must configure it correctly," and misconfigurations happen. A default-open setting that was appropriate for a development environment may accidentally persist into production.

NOI takes a different approach: if tokenization fails for any reason, the request is blocked. This is the default behavior. It is not configurable and cannot be overridden. PII never leaks, even during system errors. For compliance teams in healthcare, financial services, and legal, this default-closed posture is more defensible to auditors than a configurable setting that could be misconfigured.
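The fail-closed pattern itself is simple to express. The following is a minimal sketch of the general technique, not NOI's code: `send_protected`, `RequestBlocked`, and the stub functions are hypothetical names. The key property is that no code path forwards the raw prompt when tokenization raises.

```python
from typing import Callable


class RequestBlocked(Exception):
    """Raised instead of forwarding when protection could not be applied."""


def send_protected(
    prompt: str,
    tokenize: Callable[[str], str],
    forward: Callable[[str], str],
) -> str:
    try:
        safe_prompt = tokenize(prompt)
    except Exception as exc:
        # Fail closed: there is deliberately no fallback that sends `prompt`.
        raise RequestBlocked("tokenization failed; request not sent") from exc
    return forward(safe_prompt)


# Stubs that simulate a vault outage and record what reaches the provider.
forwarded: list[str] = []


def fake_provider(prompt: str) -> str:
    forwarded.append(prompt)
    return "ok"


def broken_tokenizer(prompt: str) -> str:
    raise ConnectionError("vault unreachable")


try:
    send_protected("Sarah Johnson called.", broken_tokenizer, fake_provider)
except RequestBlocked as err:
    print(err)  # tokenization failed; request not sent

print(forwarded)  # []
```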

Many teams start with masking because it is simpler to implement, then discover its limitations when they encounter multi-entity reasoning failures in production. The migration path from masking to tokenization depends on your current architecture.

If you are using an AI gateway with built-in PII masking (LiteLLM, Portkey), migrating to tokenization typically means adding a dedicated tokenization proxy upstream of your gateway. The proxy handles PII protection, and the gateway continues to handle routing and observability.

If you are using application-level masking (custom code that calls a masking function before the API call), migrating to a proxy-based tokenization solution like NOI means removing your custom masking code and changing the base_url in your SDK client. The proxy handles everything that your custom code previously handled, plus adds vault-backed tokenization, round-trip detokenization, fail-safe defaults, and audit logging.
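To make the size of that change concrete, the sketch below builds the same chat request twice, once against the provider and once against a proxy, using only the standard library. It assumes the proxy exposes an OpenAI-compatible path layout; the proxy URL, model name, and `build_chat_request` helper are hypothetical. With an SDK client the equivalent change is the client's `base_url` setting.

```python
import json
import urllib.request

PROVIDER_BASE_URL = "https://api.openai.com/v1"
PROXY_BASE_URL = "https://tokenization-proxy.example.com/v1"  # hypothetical


def build_chat_request(base_url: str, api_key: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the given base URL."""
    body = json.dumps(
        {
            "model": "example-model",  # placeholder model name
            "messages": [{"role": "user", "content": prompt}],
        }
    ).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


prompt = "Sarah Johnson called about her account."
direct = build_chat_request(PROVIDER_BASE_URL, "sk-demo", prompt)
proxied = build_chat_request(PROXY_BASE_URL, "sk-demo", prompt)

# Only the destination differs; the application still sends the raw prompt
# and relies on the proxy to tokenize it before it leaves for the provider.
print(direct.full_url)
print(proxied.full_url)
```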

If you are using Nightfall for DLP-based redaction, NOI and Nightfall can operate as complementary layers. Nightfall continues to provide broad DLP monitoring across SaaS and endpoints. NOI provides inline deterministic tokenization specifically for LLM API traffic.

In every migration scenario, the application-side changes are minimal because proxy-based tokenization is transparent to the calling application.

Before committing to a PII protection method, test it against your actual production prompts. The difference between masking, redaction, and tokenization becomes immediately obvious when you run real multi-entity prompts through each approach and compare the model outputs.

Start by selecting 10 to 20 representative prompts from your production workload. Choose prompts that contain multiple entities of the same type (multiple people, multiple accounts, multiple addresses). Run each prompt through each protection method and submit the protected version to your LLM provider. Compare the outputs on three dimensions: factual accuracy (does the model correctly attribute actions to the right entities?), relationship preservation (does the model understand who is connected to whom?), and response usability (would a human user accept this response as useful?).
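A small harness keeps this comparison organized. The sketch below is one possible layout, with several assumptions: the `ENTITIES` list stands in for real detector output, the three protection functions are deliberately simplified, and `call_model` is a placeholder you would replace with a call to your own LLM provider. The three score fields are left empty for a human reviewer to fill in.

```python
from typing import Callable, Optional

# Hypothetical detector output for the sample prompt.
ENTITIES = ["Sarah Johnson", "David Chen"]


def mask(prompt: str) -> str:
    for value in ENTITIES:
        prompt = prompt.replace(value, "[PERSON]")
    return prompt


def redact(prompt: str) -> str:
    for value in ENTITIES:
        prompt = prompt.replace(value, "[REDACTED]")
    return prompt


def tokenize(prompt: str) -> str:
    # Index-based tokens keep the sketch short; a real system would use a vault.
    for index, value in enumerate(ENTITIES, start=1):
        prompt = prompt.replace(value, f"TKN_{index:04d}")
    return prompt


METHODS: dict[str, Callable[[str], str]] = {
    "masking": mask,
    "redaction": redact,
    "tokenization": tokenize,
}


def run_comparison(prompts: list[str], call_model: Callable[[str], str]) -> list[dict]:
    """Run every prompt through every method and collect outputs for review."""
    rows: list[dict] = []
    for prompt in prompts:
        for method_name, protect in METHODS.items():
            protected = protect(prompt)
            scores: dict[str, Optional[int]] = {
                "factual_accuracy": None,
                "relationship_preservation": None,
                "response_usability": None,
            }
            rows.append(
                {
                    "method": method_name,
                    "protected_prompt": protected,
                    "model_output": call_model(protected),
                    **scores,  # to be filled in by a human reviewer
                }
            )
    return rows


def echo_model(prompt: str) -> str:
    """Stand-in for a real provider call."""
    return f"(model output for: {prompt})"


rows = run_comparison(["Sarah Johnson escalated the ticket to David Chen."], echo_model)
for row in rows:
    print(row["method"], "->", row["protected_prompt"])
```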

In our testing across customer workloads, masking consistently fails on prompts with three or more entities of the same type. The model cannot distinguish between them, and the output degrades to generic, ambiguous responses. Redaction fails even more severely because the model loses the entities entirely. Tokenization preserves the relationships in every case we have tested, producing output that is functionally equivalent to what the model generates with real data.

This is not a theoretical exercise. Run the test before you choose a method. The results will make the decision obvious for your specific use case.

These questions come up frequently when teams are comparing PII protection methods for AI applications. The answers are based on our experience testing all three approaches against production LLM workloads and on publicly documented capabilities of the tools discussed.

If your current masking solution uses a proxy-based architecture, switching to a tokenization proxy like NOI may require only a base_url change. If your masking is implemented at the application level (inline code that calls a masking function before the API call), you would need to remove that code and route traffic through the tokenization proxy instead. In either case, the application-side changes are minimal because the tokenization proxy handles everything transparently.

Tokenization adds a small amount of latency for detection and token replacement on the request path, and for detokenization on the response path. In well-optimized implementations, this overhead is measured in low single-digit milliseconds for detection and tokenization. For most production applications, this is negligible compared to the LLM inference time itself, which typically ranges from hundreds of milliseconds to several seconds depending on the model and prompt length.

Masking is simpler to implement and may be sufficient for use cases where entity identity does not matter. Sentiment classification, language detection, document categorization, and other tasks that operate on content structure rather than entity relationships can work with masked data. If you are prototyping quickly and do not yet have compliance requirements, masking offers a faster path to basic protection. However, most teams that start with masking eventually encounter multi-entity reasoning failures and migrate to tokenization.

AI gateways like LiteLLM and Portkey offer PII masking as one feature among many (routing, cost management, observability, and dozens of other guardrails). Masking is simpler to implement because it does not require a secure vault for storing token mappings. Building deterministic tokenization with vault-backed round-trip detokenization is a significantly more complex engineering effort. Gateway products prioritize breadth of features over depth of PII protection. Dedicated tokenization solutions like NOI prioritize depth of PII protection as their core product.

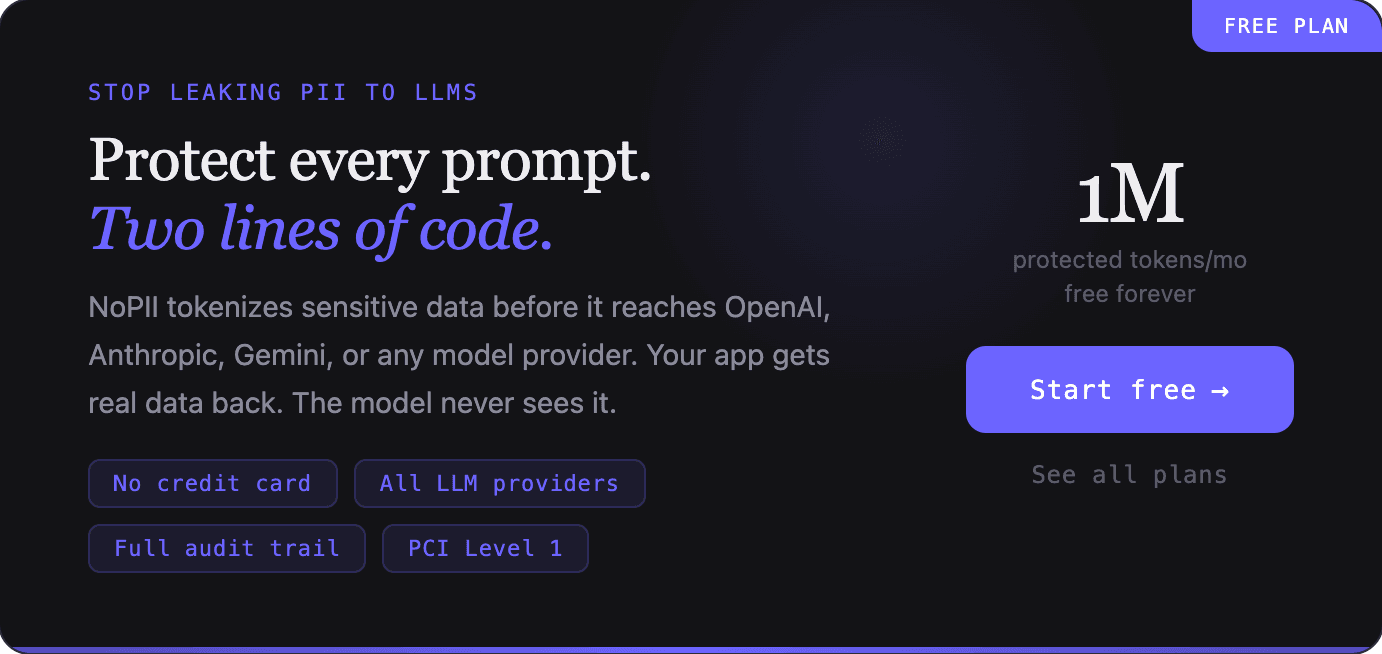

Building a production-grade tokenization system requires assembling a PII detection engine (Presidio or equivalent), a secure tokenization vault with compliance certifications, a reverse proxy with streaming support, an admin console, and audit logging. This typically requires 3 to 6 months of engineering time and 2 or more engineers for ongoing maintenance. NOI offers this as a managed service with a free tier (1M tokens/month) and a Pro tier at $50/month plus $1 per million tokens. For most teams, the managed service is both faster and more cost-effective than building in-house.

NOI provides a full audit trail for every PII detection, logging the entity type, confidence score, session ID, provider, model, and timestamp. The admin console includes a live detection preview where you can test prompts and see exactly what gets detected and tokenized before deploying to production. Audit logs are searchable, filterable, and exportable to CSV for compliance reporting.
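For teams sketching their own audit format, a record carrying the fields listed above might look like the following. The field names, the `DetectionRecord` class, and the CSV layout are assumptions for illustration and do not reflect NOI's actual schema or export format. Note that the record describes the detection without storing the detected value.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass(frozen=True)
class DetectionRecord:
    """One audit entry per detection; the PII value itself is never stored."""

    entity_type: str
    confidence: float
    session_id: str
    provider: str
    model: str
    timestamp: str  # ISO 8601, UTC


def to_csv(records: list[DetectionRecord]) -> str:
    """Serialize audit records to CSV text with a header row."""
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=[f.name for f in fields(DetectionRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return out.getvalue()


records = [
    DetectionRecord("PERSON", 0.98, "sess-001", "example-provider",
                    "example-model", "2026-04-01T12:00:00+00:00"),
    DetectionRecord("SSN", 0.91, "sess-001", "example-provider",
                    "example-model", "2026-04-01T12:00:01+00:00"),
]
print(to_csv(records))
```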



PII tokenization for AI is the process of replacing personally identifiable information in LLM prompts with deterministic, reversible tokens before the data reaches a model provider. The original values are stored securely in a vault. The model receives a sanitized version of the prompt that preserves structure and relationships, allowing it to reason effectively without ever seeing real sensitive data. When the response returns, the tokens are replaced with the original values before the application receives them.